React and AWS S3 - Easy Image Uploading Quick Guide

Easily host images in S3 by uploading them directly from your React frontend web app.

This walkthrough is broken up into two sections.

- First half - Setting up your S3 bucket in AWS

- Second half - Setting up your React app with S3

If you are familiar with how to set up S3 buckets in AWS, I suggest skipping to the Frontend Implementation sections. You need access to an AWS account for this guide.

HTML inputs with a type of file that accept image/* offer an easy method for users to upload their photos into your frontend app (Mozilla's MDN docs have a good example). But what are our next steps after a user has uploaded their photos?

Here are the steps we'll follow to ensure our users' photos are uploaded to an S3 bucket and ultimately rendered in your React app:

- Create S3 Bucket and Credentials

- Import and configure AWS SDK

- Set up an onFileChange function to handle multiple files at once

- Make PUT requests to S3-provided URLs for each photo we'd like to upload

- Serve S3-stored images on our frontend

Create S3 Bucket and Credentials

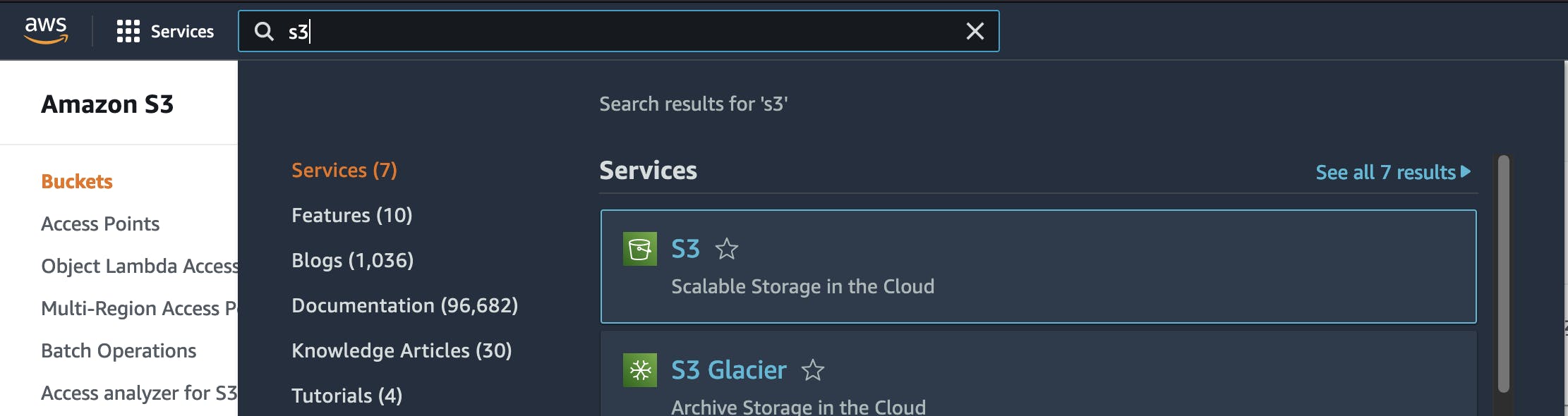

Let's set up our AWS bucket. In your AWS console, navigate to S3 and follow the below steps.

The Bucket

- Create a bucket

- Give that bucket a unique name

- Note the region it's in (mine was in us-east-1)

- Uncheck "Block all public access" and acknowledge

- Click "Create Bucket"

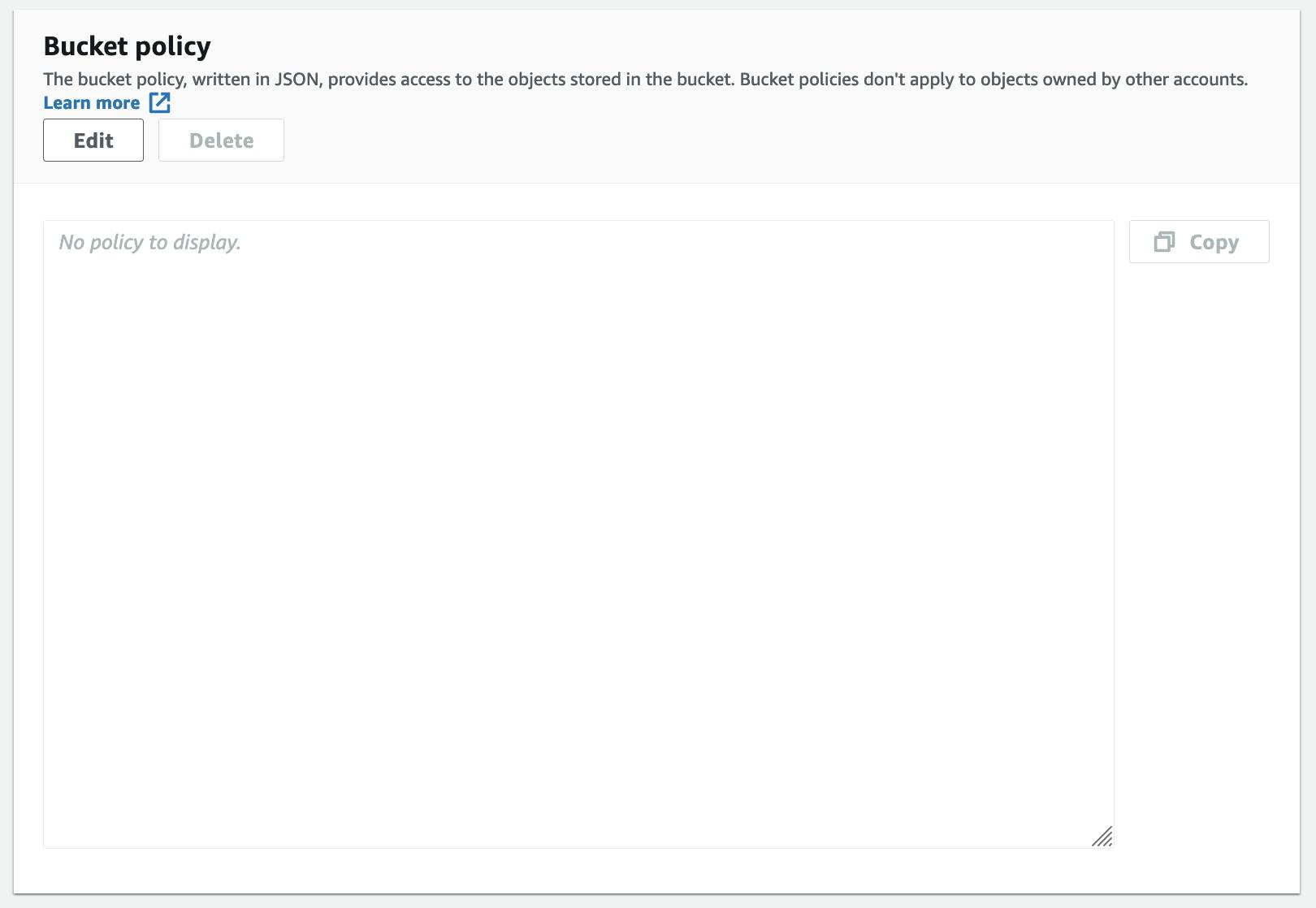

Navigate into your bucket and select the Permissions property, where we'll need to set up your bucket's permissions and CORS policies.

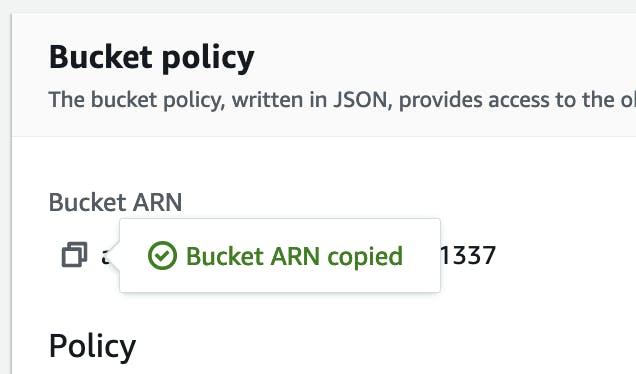

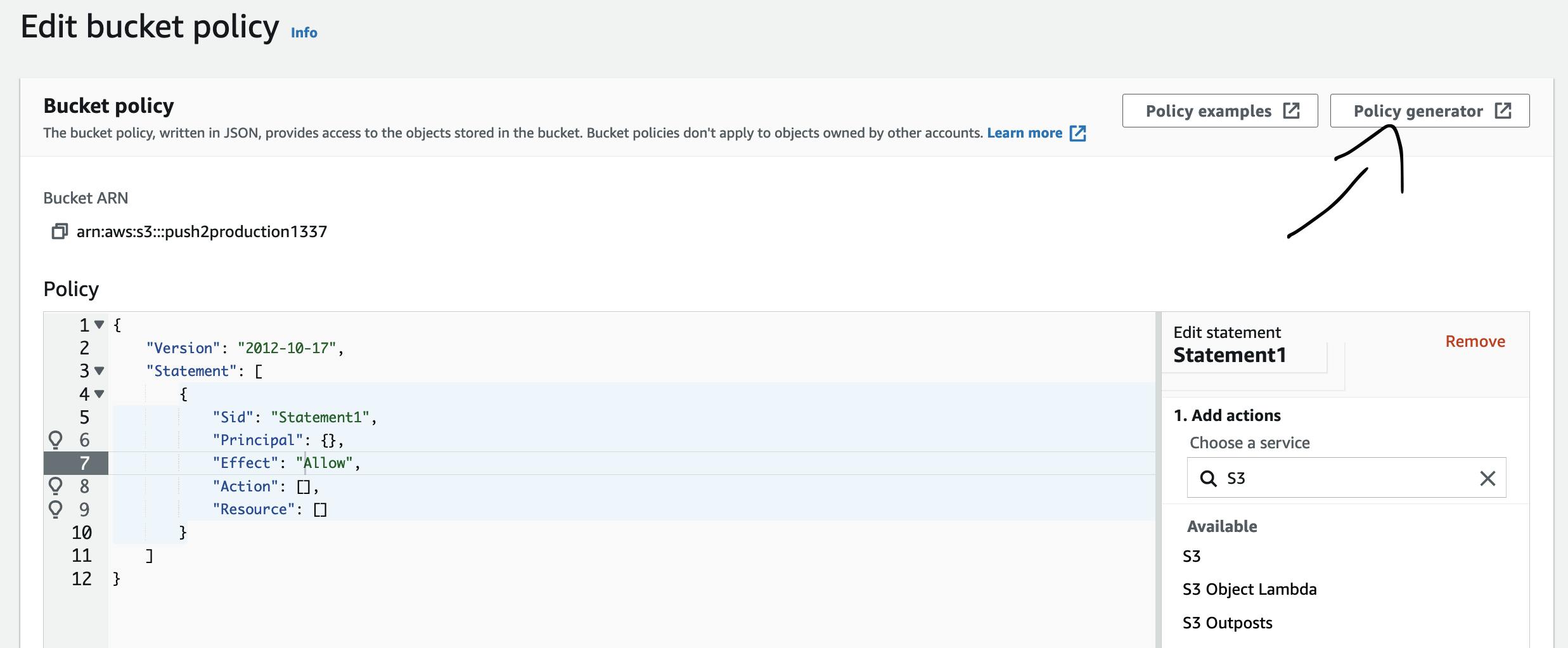

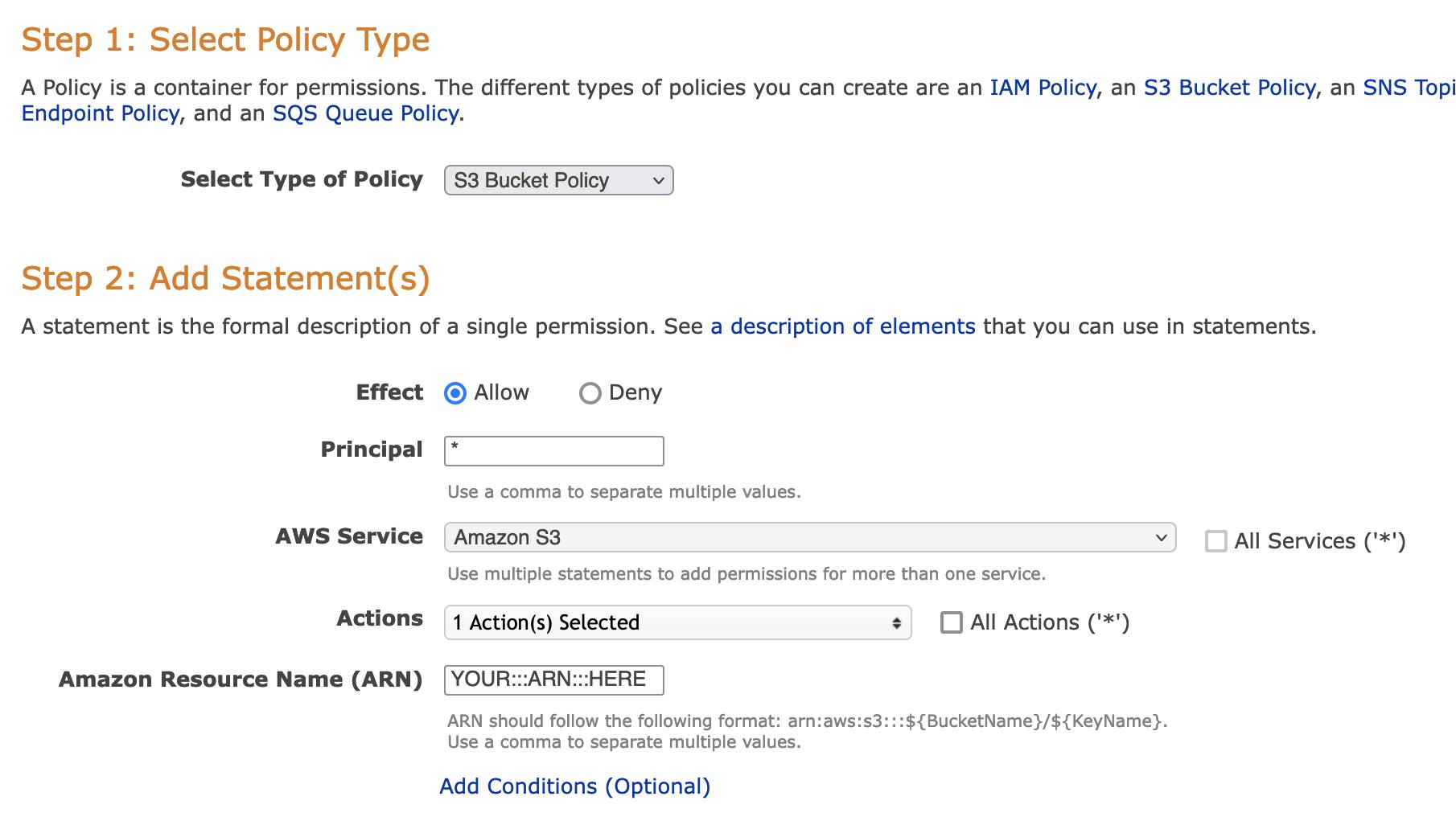

In Bucket Policy, add a new policy statement by selecting edit, copy your bucket's ARN, and then head over to the AWS Policy Generator.

- ARN:

- Policy Generator:

Fill out your policy generator so that it resembles this (note that the action I allow is GetObject):

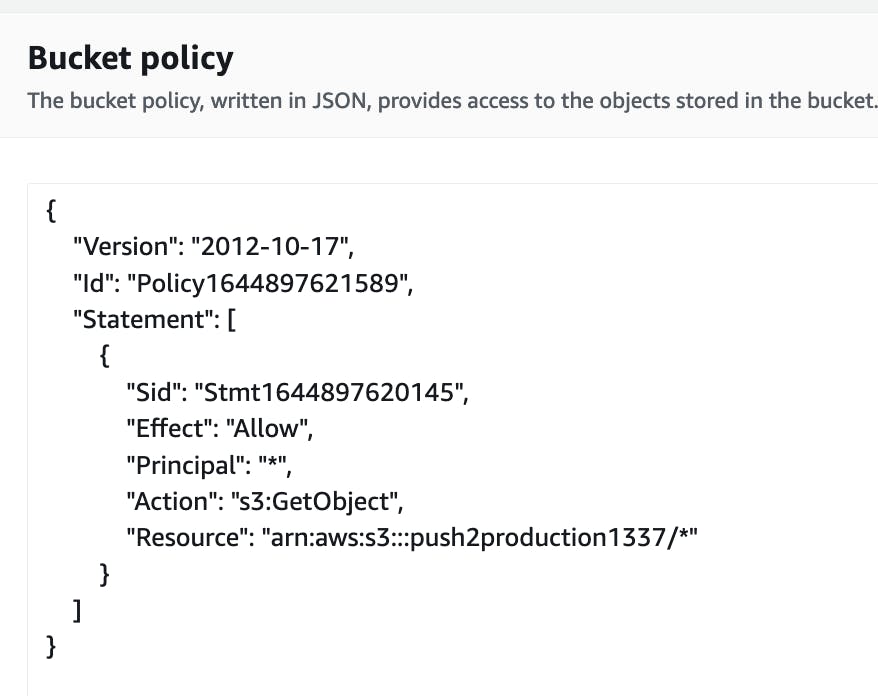

Copy/paste the generated policy into the Bucket Policy editor under your S3 bucket's Permissions.

Here's what my generated policy looked like after completing:
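For reference, a public-read policy of this kind generally has the shape sketched below. This is an illustration rather than the exact generator output: `buildPublicReadPolicy`, the `Sid` value, and the `my-example-bucket` name are all hypothetical, and your generated policy may differ in its details.

```javascript
// Hypothetical helper: builds a bucket policy that lets anyone read objects.
// The bucket name is a placeholder, not the bucket used in this guide.
function buildPublicReadPolicy(bucketName) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Sid: 'PublicReadGetObject',
        Effect: 'Allow',
        Principal: '*',
        Action: 's3:GetObject',
        // The trailing /* applies the statement to every object in the bucket
        Resource: `arn:aws:s3:::${bucketName}/*`
      }
    ]
  };
}

// Print JSON that could be pasted into the Bucket Policy editor
console.log(JSON.stringify(buildPublicReadPolicy('my-example-bucket'), null, 2));
```

Allowing only `s3:GetObject` keeps the bucket publicly readable without letting anonymous visitors write or delete objects.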

Copy/paste this into your bucket's CORS options at the bottom of Permissions:

    [
        {
            "AllowedHeaders": [
                "*"
            ],
            "AllowedMethods": [
                "PUT",
                "HEAD",
                "GET"
            ],
            "AllowedOrigins": [
                "*"
            ],
            "ExposeHeaders": []
        }
    ]

Create Credentials for React <> S3 Interactions

Great! Now our bucket's set up and ready to go. But we need to give our frontend app the credentials.

Let's achieve this by creating an IAM policy and a user that allow our app to perform actions on our S3 bucket.

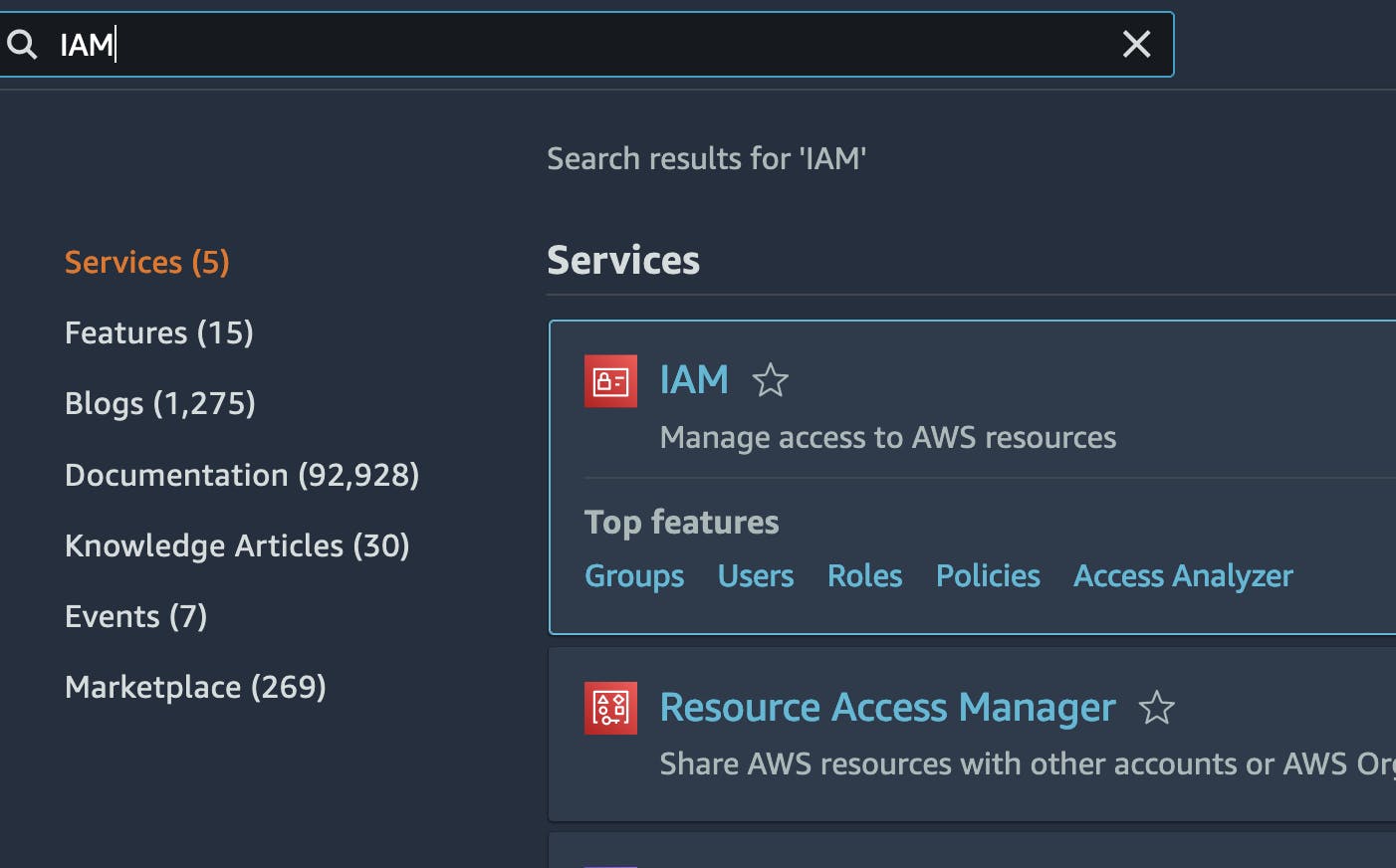

Navigate to IAM (Identity and Access Management):

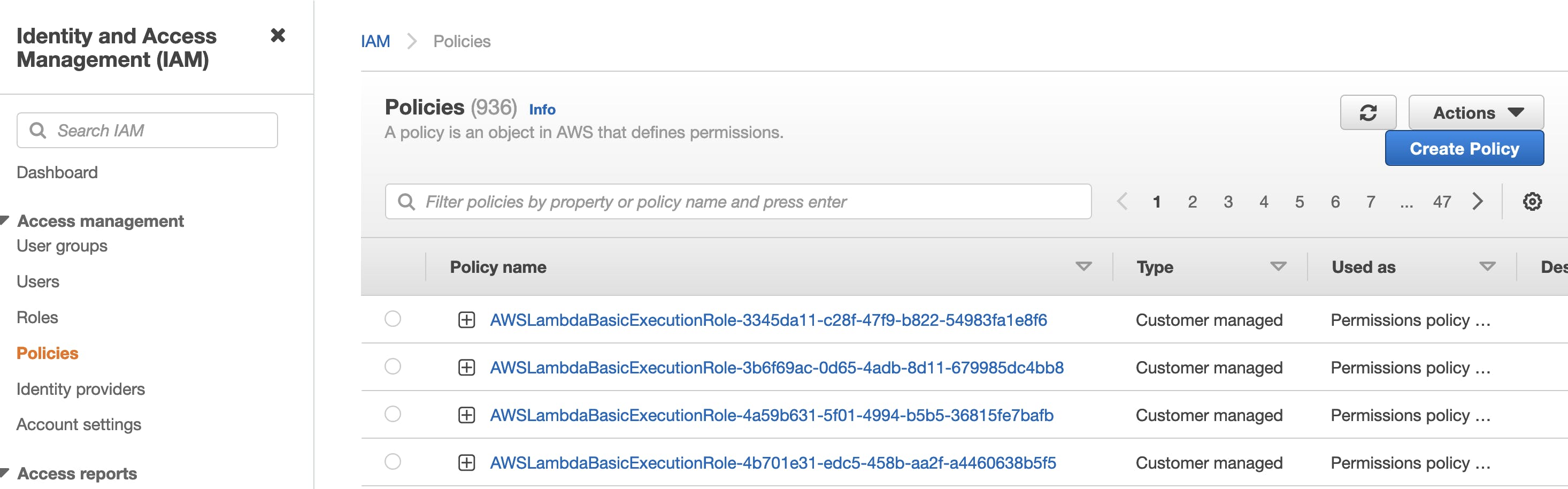

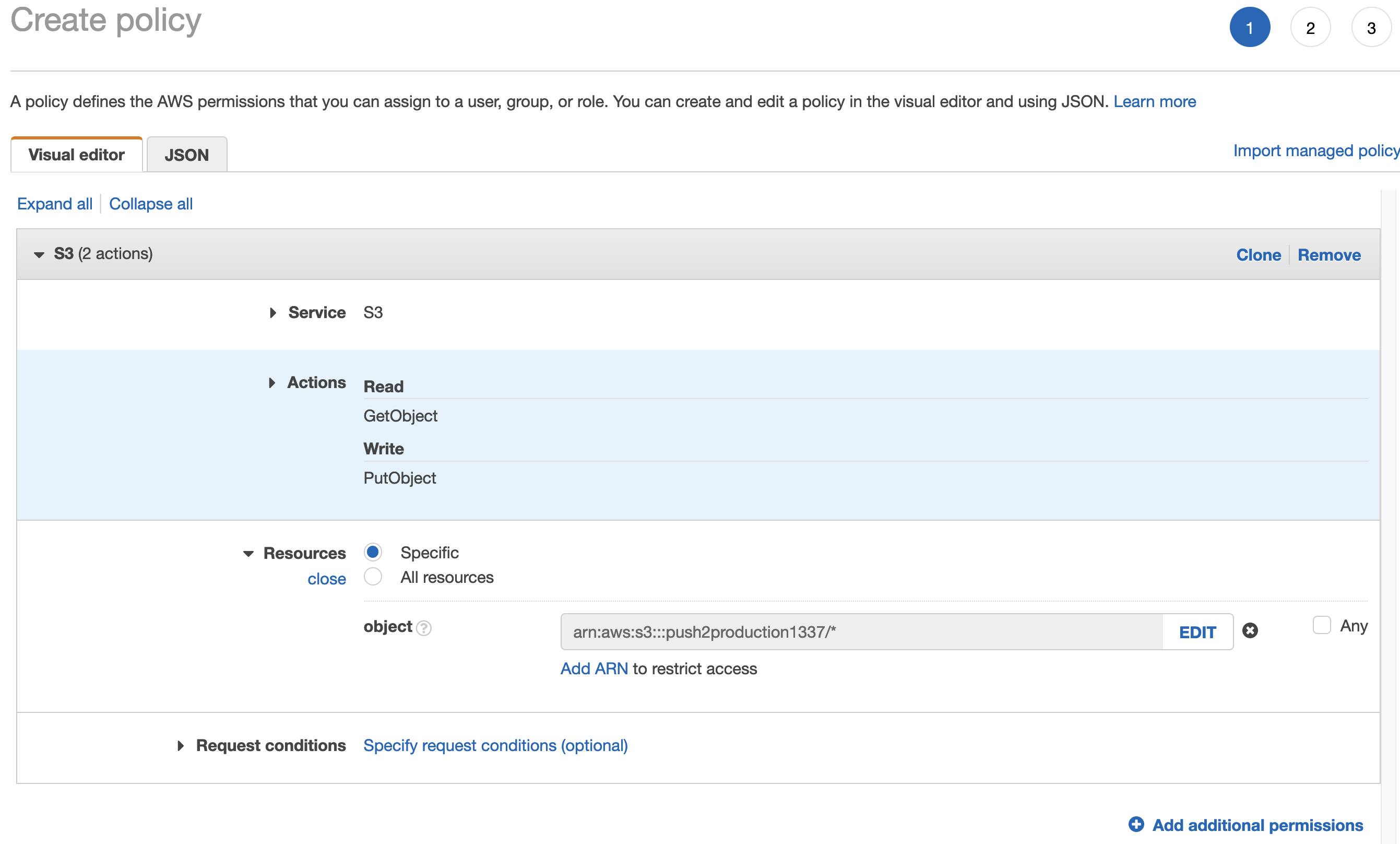

Click Policies on the left-hand side, and inside that page, click Create Policy.

Set up your IAM policy so that it looks as follows (replace push2production1337 with your specific S3 ARN):
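As a rough sketch of what such a policy can look like, the snippet below builds one scoped to a single bucket. The action list is an assumption (uploading through a signed URL needs at least `s3:PutObject`), and `buildUploadPolicy` is a hypothetical helper, not part of any AWS tooling.

```javascript
// Hypothetical helper: builds an IAM policy document scoped to one bucket.
// Assumption: uploads only need s3:PutObject; add actions if your app needs more.
function buildUploadPolicy(bucketName, actions = ['s3:PutObject']) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Effect: 'Allow',
        Action: actions,
        // Objects inside the bucket, not the bucket itself
        Resource: `arn:aws:s3:::${bucketName}/*`
      }
    ]
  };
}

// Replace the bucket name with your own before using the output
console.log(JSON.stringify(buildUploadPolicy('push2production1337'), null, 2));
```

Unlike a bucket policy, an identity policy has no `Principal` field because it applies to whichever user it is attached to.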

Skip tags and give this policy a name that you can identify.

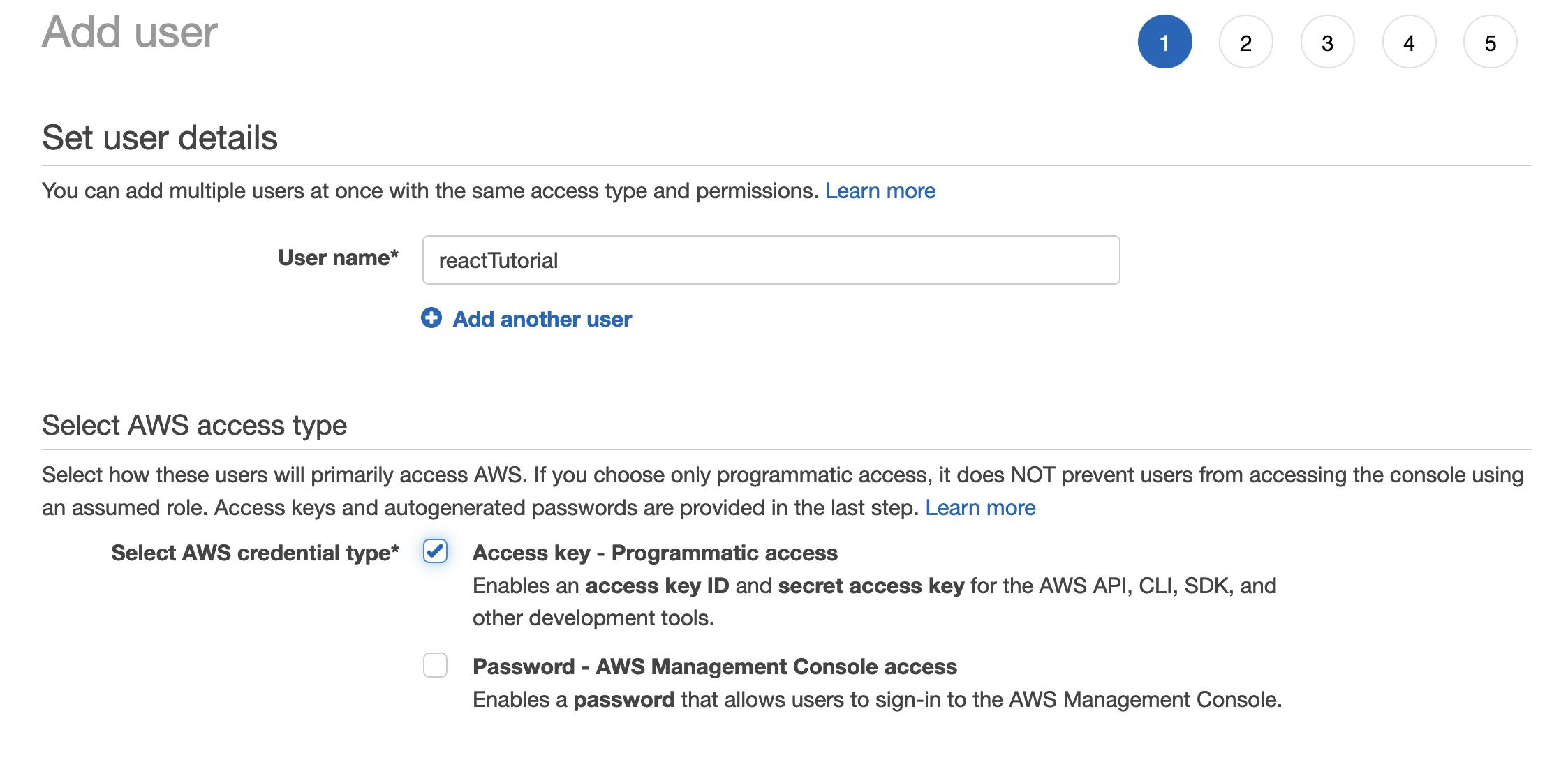

Next, navigate to Users on the left-hand menu and select Add Users. Give your user a name and select Access Key - Programmatic access.

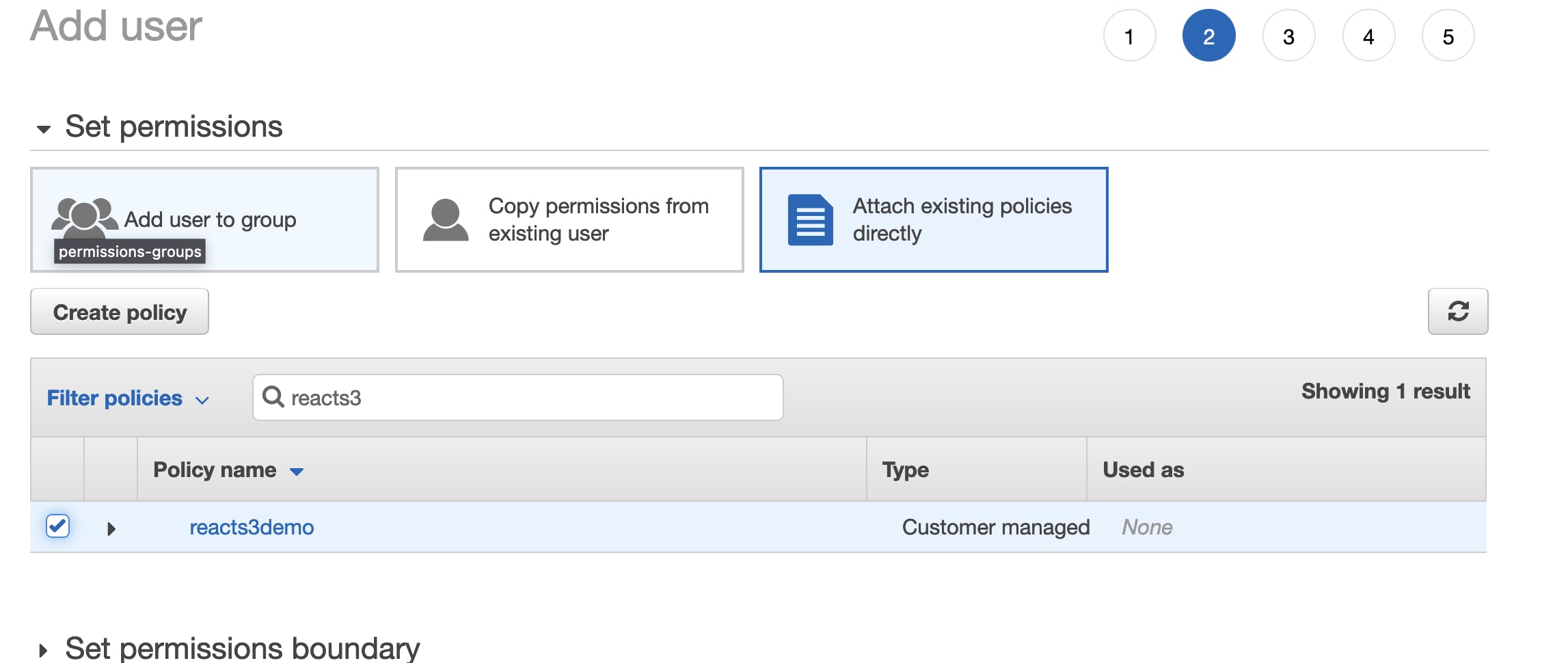

Now attach the policy we previously made to this user:

Create the user and copy down the user's Access key ID and Secret Access Key.

And that's it! Now that our S3 bucket has its Policy and CORS set up, and now that we've created an IAM policy and attached that to a User, we have successfully set up our S3 bucket.

Frontend Implementation - Express & S3 Configuration

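In this section, we'll set up the Express server that sits between our React app and our newly created S3 bucket. The server holds our S3 credentials and hands out signed upload URLs, so the secret key never reaches the browser.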

In the terminal, run npm i dotenv aws-sdk to download dotenv and AWS's SDK.

Next, create a .env file in the root directory that will store our S3 credentials.

AWS_ACCESS_KEY_ID=<YOUR-KEY-HERE>

AWS_SECRET_ACCESS_KEY=<YOUR-KEY-HERE>
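A missing or misspelled variable in .env only shows up later as a confusing AWS error, so it can help to fail early. The sketch below uses a hypothetical `requireEnv` helper (not part of dotenv) to do that:

```javascript
// Hypothetical helper: read a required environment variable or fail loudly.
// `env` defaults to process.env but can be swapped out for testing.
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (!value) {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Example with a plain object standing in for process.env
const exampleEnv = { AWS_ACCESS_KEY_ID: 'example-id' };
console.log(requireEnv('AWS_ACCESS_KEY_ID', exampleEnv)); // prints "example-id"
```

In a real server you would call `requireEnv('AWS_ACCESS_KEY_ID')` after dotenv has loaded the file.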

Afterwards, create a file called s3.js with the following code:

    // Importing our installed dependencies
    const aws = require('aws-sdk');
    // Load the variables from .env into process.env
    require('dotenv').config();

    // Configuring our S3 client
    const region = "us-east-1";
    const bucketName = "YOUR_BUCKET_NAME_HERE";
    const accessKeyId = process.env.AWS_ACCESS_KEY_ID;
    const secretAccessKey = process.env.AWS_SECRET_ACCESS_KEY;

    const s3 = new aws.S3({
      region,
      accessKeyId,
      secretAccessKey,
      signatureVersion: 'v4'
    });

    // Here, we retrieve a "signed" url from AWS for us to send our image to:
    // https://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#getSignedUrlPromise-property
    function generateUploadURL() {
      // Generate a random file name for our photos to be stored at:
      const imageName = "image_number_" + Math.random() * 1000;

      const params = {
        Bucket: bucketName,
        Key: imageName,
        Expires: 60 // the signed URL stays valid for 60 seconds
      };

      return s3.getSignedUrlPromise('putObject', params);
    }

    module.exports = generateUploadURL;
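`Math.random() * 1000` works for a demo, but two uploads can end up with the same name and overwrite each other. One possible alternative, sketched here with Node's built-in crypto module, is to derive the name from random bytes. `generateImageName` and the `image_` prefix are hypothetical names chosen for this example.

```javascript
const crypto = require('crypto');

// Hypothetical alternative to Math.random():
// 16 random bytes rendered as 32 hex characters make collisions very unlikely.
function generateImageName(prefix = 'image_') {
  return prefix + crypto.randomBytes(16).toString('hex');
}

console.log(generateImageName());
```

The returned string could be used as the `Key` in the params passed to `getSignedUrlPromise`.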

In your Express server, create the following route. Our frontend app will send a GET request to this route, which will call the function exported from s3.js. That function returns a "signed URL" that we can upload photos to.

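    // Import the function exported from s3.js (the path assumes s3.js sits next to this file)
    const generateUploadURL = require('./s3');

    // GET SECURE URL from AWS:
    app.get('/s3Url', (req, res) => {
      generateUploadURL().then(url => {
        res.status(200).send(url);
      });
    });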
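If the call to AWS fails, the route above never responds and the browser request hangs. A minimal sketch of one way to handle that is shown below; `makeS3UrlHandler` is a hypothetical wrapper, and it only assumes that the function passed in returns a promise of a URL string.

```javascript
// Hypothetical factory: wraps a URL generator in an Express-style handler
// that reports failures instead of leaving the request hanging.
function makeS3UrlHandler(generateUploadURL) {
  return async function s3UrlHandler(req, res) {
    try {
      const url = await generateUploadURL();
      res.status(200).send(url);
    } catch (err) {
      // Avoid leaking AWS error details to the client
      res.status(500).send('Could not generate upload URL');
    }
  };
}

// Usage in the server (assuming `app` and `generateUploadURL` exist):
// app.get('/s3Url', makeS3UrlHandler(generateUploadURL));
```

Passing the generator in as an argument also makes the handler easy to exercise with a stub instead of real AWS credentials.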

Frontend Implementation - HTML & Preparing Images for Network Transfer to S3

In our React app, set up an onChange listener attached to the HTML input that accepts files:

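    // Inside your component (assumes useState is imported from 'react')
    const [imageArray, setImageArray] = useState([]);

    async function onFileChange (e) {
      e.persist();
      // e.target.files is a FileList; turn it into a real array
      let arrOfFiles = Object.values(e.target.files);

      function getBase64(file) {
        const reader = new FileReader();
        return new Promise((resolve, reject) => {
          // Attach the handlers before starting the read
          reader.onloadend = () => {
            resolve(reader.result);
          };
          reader.onerror = () => reject(reader.error);
          reader.readAsDataURL(file);
        });
      }

      const promiseArray = [];
      arrOfFiles.forEach(file => promiseArray.push(getBase64(file)));

      // Despite the name, arrOfBlobs holds Base64 data URL strings
      let arrOfBlobs = await Promise.all(promiseArray);
      // Functional update so we always append to the latest state
      setImageArray(prev => prev.concat(arrOfBlobs));
    }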

When we upload a photo to our input, onFileChange will take the object holding all photos and turn it into an array (arrOfFiles) of that object's values.

We can now loop through arrOfFiles and push a promise that will resolve into a base64 representation of that file into a promiseArray.

Since promiseArray is an array of promises, we need to wait for all images to finish being converted to base64. We can ensure all promises in our array resolve by using Promise.all().

Finally, we store our resolved array of Base64-encoded images in state.
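One detail worth knowing about Promise.all() is that the resolved array follows the order of the input array, not the order in which the promises finish. The small sketch below illustrates this with timers standing in for file reads; `delayedValue` and the file names are made up for the demo.

```javascript
// Resolve after a delay, to imitate files that take different times to read
function delayedValue(value, ms) {
  return new Promise(resolve => setTimeout(() => resolve(value), ms));
}

async function demo() {
  const promiseArray = [
    delayedValue('first.png', 30), // slowest to finish
    delayedValue('second.png', 10),
    delayedValue('third.png', 20)
  ];
  // Results follow the order of promiseArray, not the order of completion
  return Promise.all(promiseArray);
}

demo().then(results => console.log(results));
// → [ 'first.png', 'second.png', 'third.png' ]
```

This is why the images stored in state stay in the same order the user selected them.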

But what's going on in our getBase64 function? Converting our image to Base64 allows us to send an encoded version of our image across the network (to our S3 bucket) without data loss.

FileReader is a native browser API that lets us asynchronously read the contents of files, or in our case, images. Since the browser needs time to "read" (aka convert) our images to Base64, we'll be dealing with asynchronous functions.

As soon as our FileReader completes its read of our image, a loadend event is triggered, which fires our onloadend handler. There, we resolve a promise that we return, which will ultimately resolve in our promiseArray.

The FileReader web API is pretty cool! Feel free to read more about it on MDN.

Frontend Implementation - Transferring Photos from our Frontend to the Backend and Rendering Images

Almost there! The following function uploads our base64 images to S3 and derives, for each one, a URL that we can use as the source of an HTML img tag.

    // Assumes axios is imported at the top of the file: import axios from 'axios';

    // State now holding our Base64 images ...
    const [imageArray, setImageArray] = useState([]);

    async function onFormSubmit (e) {
      e.preventDefault();
      e.persist();

      // STEP 1: Declare an array to hold promise values of unresolved API calls:
      let arrOfS3UrlPromises = [];

      // STEP 2: Loop through imageArray (i.e. your state full of base64 images):
      imageArray.forEach(img => {
        // For each image, retrieve an S3 URL to upload that image to:
        let getUrl = axios({
          method: 'GET',
          url: 'http://localhost:3000/s3Url'
        }).then(data => data.data);
        arrOfS3UrlPromises.push(getUrl);
      });

      // STEP 3: Wait for those axios requests to resolve, giving you the final S3 signed URL array:
      let arrOfS3Urls = await Promise.all(arrOfS3UrlPromises);

      // STEP 4: Declare an array to hold PUT axios requests to the above URLs:
      let arrOfS3SuccessPutPromise = [];

      // STEP 5: Loop through the above S3 signed URLs
      arrOfS3Urls.forEach((s3url, index) => {
        const base64 = imageArray[index];

        // STEP 6: Use the Buffer object (from Node, more information below).
        // Buffer is not built into browsers; depending on your bundler you may need a polyfill.
        const base64Data = Buffer.from(base64.replace(/^data:image\/\w+;base64,/, ""), 'base64');

        // STEP 7: Upload image to S3. The body is raw bytes at this point,
        // so no Content-Encoding header is set.
        let successCall = axios({
          method: 'PUT',
          url: s3url,
          headers: {
            'Content-Type': 'image/jpeg'
          },
          data: base64Data
        });
        arrOfS3SuccessPutPromise.push(successCall);
      });

      let arrOfS3SuccessPuts = await Promise.all(arrOfS3SuccessPutPromise);

      // STEP 8: Once the above PUT requests resolve, arrOfS3SuccessPuts will contain one axios response per image.
      // This map returns the exact URL we can use as an img tag's source:
      let s3photoUrlsArray = arrOfS3SuccessPuts.map(response => {
        // Dropping the query string removes the signature and leaves the object's URL
        return response.config.url.split('?')[0];
      });

      // s3photoUrlsArray can now be stored in state and used for img src values
    }
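The last step of the function above relies on the fact that a signed URL is just the object's URL plus a query string of signing parameters. As a small illustration, the sketch below does the same trimming with the built-in URL class; the signed URL shown is a made-up example, and `toObjectUrl` is a hypothetical helper name.

```javascript
// Made-up signed URL; real ones carry several X-Amz-* query parameters
const signedUrl =
  'https://my-example-bucket.s3.amazonaws.com/image_number_42?X-Amz-Signature=abc123&X-Amz-Expires=60';

// Same result as signedUrl.split('?')[0], using the built-in URL class
function toObjectUrl(url) {
  const parsed = new URL(url);
  return parsed.origin + parsed.pathname;
}

console.log(toObjectUrl(signedUrl));
// → https://my-example-bucket.s3.amazonaws.com/image_number_42
```

Because the bucket policy allows public reads, this trimmed URL can be loaded directly by an img tag.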

If you're curious about step 6, we're essentially stripping the data URL prefix from our Base64 image using a regular expression, and leveraging Node's Buffer.from static method to return a new Buffer holding the decoded bytes. The Node.js documentation for Buffer.from covers this in more detail.
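To make that step more concrete, here is a minimal sketch that splits a data URL into its MIME type and its decoded bytes. `parseDataUrl` is a hypothetical helper, and the example runs in Node, where Buffer is built in. Reading the MIME type this way is one option for setting Content-Type per file instead of hard-coding image/jpeg.

```javascript
// Split a Base64 data URL into its MIME type and decoded bytes.
function parseDataUrl(dataUrl) {
  const match = /^data:([^;,]+);base64,(.*)$/.exec(dataUrl);
  if (!match) {
    throw new Error('Not a base64 data URL');
  }
  return {
    mimeType: match[1],
    bytes: Buffer.from(match[2], 'base64')
  };
}

// Build a tiny data URL by hand; the payload is the text "hello", not a real image
const dataUrl = 'data:image/png;base64,' + Buffer.from('hello').toString('base64');
const { mimeType, bytes } = parseDataUrl(dataUrl);

console.log(mimeType);         // → image/png
console.log(bytes.toString()); // → hello
```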

And that's it!

If you've followed this far, you should now be able to upload images to your newly created S3 bucket.

I hope this guide was helpful and I'm always open to feedback. Cheers!